
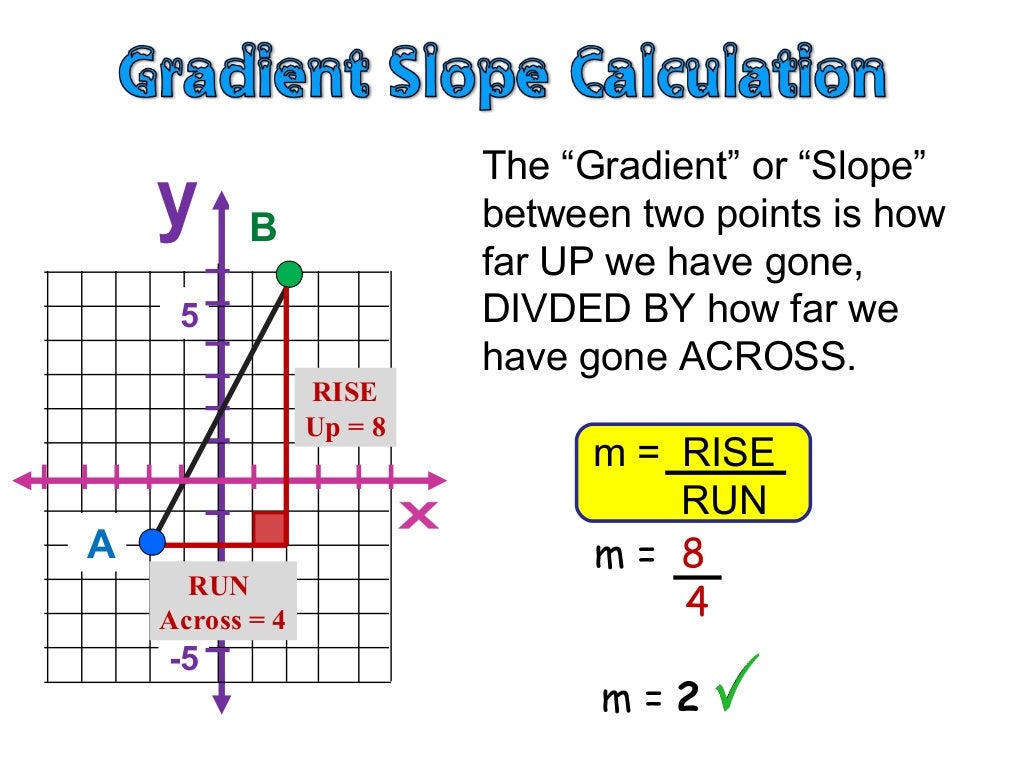

Define gradient

7/3/2023

The fact that gradients are accumulated in pytorch allows you to get the correct gradient for all the computations that you do with a given Variable, even if you use it at multiple places in convoluted ways. The drawback is that you have to manually reset the values to 0 so that the gradients computed previously do not interfere with the ones you are currently computing.

I also got confused by this "zeroing gradient" when first learning pytorch. The documentation does say that backward() "accumulates gradients in the leaves - you might need to zero them before calling it." But this is quite hard to find and pretty confusing for (say) tensorflow users. Official tutorials like 60 Minute Blitz or PyTorch with Examples both say nothing about why one needs to zero the gradients during training. I think it would be useful to explain this a little more in beginner-level tutorials. RNN is a good example of why accumulating gradients (instead of refreshing them) is useful, but I guess new users wouldn't even know that backward() is accumulating gradients.

Say y depends on x. y.backward() doesn't just assign the value of y'(x) to x.grad. It actually adds y'(x) to the current value of x.grad (think of it as x.grad += true_gradient). For example, if x is created with requires_grad=True and holds the value 0, and y.backward() is called 5 times with y = sin(x), the final value of x.grad will be 5*cos(0) = 5. Zeroing x.grad before each call to y.backward() makes sure x.grad is exactly the current y'(x), not a sum of y'(x) over all previous iterations.
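The accumulate-versus-zero behaviour of backward() can be checked with a small sketch. It assumes a recent PyTorch, where a plain tensor takes requires_grad directly and the Variable wrapper is no longer needed. The names accumulated and refreshed are made up for illustration, and y = sin(x) is inferred from the cos(0) in the text rather than copied from the thread.

```python
import torch

# Leaf tensor holding x = 0; requires_grad=True makes autograd track it.
x = torch.zeros(1, requires_grad=True)

# Without zeroing: every backward() adds y'(x) = cos(0) to x.grad.
for _ in range(5):
    y = torch.sin(x).sum()
    y.backward()
accumulated = x.grad.item()

# With zeroing before each backward(): x.grad holds only the latest y'(x).
for _ in range(5):
    x.grad.zero_()
    y = torch.sin(x).sum()
    y.backward()
refreshed = x.grad.item()

print(accumulated, refreshed)
```

In a normal training loop the same reset is usually done once per step through the optimizer or the module rather than on each tensor by hand.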
I think the big difference with tensorflow is the following. Since you use a static graph there, you define exactly what should be done to make one gradient computation/update, and then you just tell it to do it using a given input/target.

In pytorch, it is significantly more flexible, as the autograd engine will just "remember" how to compute the gradient for a given variable while you are performing computations with this Variable. This means that you can get the gradients wrt a variable, then perform computation with it again, then recompute gradients corresponding to these new operations. In this scheme, there is not a single point where you stop performing "forward" operations and know that the only thing left to do is compute the gradients. So it is trickier to automatically set the gradients to 0, because you don't know when one computation ends and a new one starts.

An example where gradient accumulation is useful is when you share some part of a network between two different tasks. Starting from `input = Variable(data)`, you compute the first loss and get the gradients for it. This adds the gradients wrt loss1 in both the "task1" net and the "feature_extractor" net, so each parameter "w" in "feature_extractor" has its gradient d(loss1)/dw. You then perform the second task and get the gradients for it as well. This adds gradients in "task2" and accumulates in "feature_extractor", so each parameter in "feature_extractor" now contains d(loss1)/dw + d(loss2)/dw.
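Here is a rough sketch of the shared-network case, again assuming a recent PyTorch. The layer sizes, the random batch, and the two loss expressions are all made up for illustration. Only the names feature_extractor, task1, task2, loss1 and loss2 come from the text. The first backward() is called with retain_graph=True so that the shared part of the graph is still available for the second one.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical stand-ins for the three networks named in the text.
feature_extractor = nn.Linear(4, 3)
task1 = nn.Linear(3, 1)
task2 = nn.Linear(3, 1)

data = torch.randn(8, 4)  # made-up input batch
features = feature_extractor(data)

# First loss: fills .grad in "task1" and in "feature_extractor".
loss1 = task1(features).mean()
loss1.backward(retain_graph=True)  # keep the shared graph for the next backward
grad_after_loss1 = feature_extractor.weight.grad.clone()

# Second loss: fills .grad in "task2" and adds to "feature_extractor".
loss2 = task2(features).pow(2).mean()
loss2.backward()
grad_total = feature_extractor.weight.grad.clone()

# Cross-check: gradient of the summed loss on a fresh forward pass.
fresh = feature_extractor(data)
summed = task1(fresh).mean() + task2(fresh).pow(2).mean()
(expected,) = torch.autograd.grad(summed, feature_extractor.weight)
matches = torch.allclose(grad_total, expected, atol=1e-6)
print(matches)
```

The cross-check uses torch.autograd.grad because it returns the gradient instead of adding it to .grad, so it does not disturb the accumulated values being compared.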
